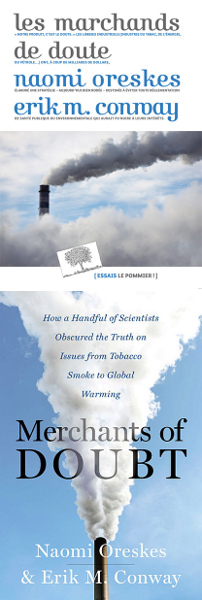

I just finished the book "Les Marchands de doute" ("Merchants of Doubt") by Naomi Oreskes and Erik M. Conway. It took me a few weeks because I read so infrequently, but I'm glad I did.

Since by nature I like thinking in terms of doubts, I know I could easily be influenced by some skeptical arguments on global warming, the kind of arguments I read or hear every now and then from friends, colleagues or randomly on the internet. Indeed, I think that the "Cartesian doubt" approach is fruitful when approaching a new research topic.

However, the book "Merchants of Doubt" sheds light on an unfruitful kind of doubt I was unaware of: the unfair doubt, the doubt on a topic where a reasonable scientific consensus has already been reached, the doubt that is heavily promoted by people who feel threatened by those scientific results. Such doubt is pushed beyond reason in order to give the general public a false impression: the impression that there is still an active scientific debate when this debate is actually long over (or has moved on to minor details of the question).

I believe "Merchants of Doubt" is the outcome of a tremendous amount of historical research by its authors. Naomi Oreskes and Erik M. Conway show the unexpected connection between the doubts cast on the dangers of tobacco, pesticides, ozone depletion and climate change. Indeed, it's quite incredible to learn that some of the people who were constantly attacking scientific results (and sometimes the scientists themselves!) on those pretty different topics were actually the same people!

Just as a pointer, Naomi Oreskes and Jacques Treiner (translator of the French edition) were guests on the radio program Science publique just a year ago, when the French edition was published.

As a result of this reading, I have now downloaded some of the IPCC reports on climate change! I doubt I'll take the time to read them in full, but my first, very positive impression was of how clear their writing is. Figures are well presented and uncertainty (since there is always some) is treated very systematically, through a precisely defined vocabulary (like "Virtually certain", "Extremely likely", "More likely than not") tied to explicitly stated probability thresholds. This stands in sharp contrast to the crude approach of the "Merchants of Doubt"...
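
To make the idea of a calibrated vocabulary concrete, here is a minimal Python sketch that maps a probability to one of the likelihood terms. The thresholds are the lower bounds as I understand them from the IPCC uncertainty guidance (99%, 95%, 90%, 66%, 50%), so check them against the reports themselves. The function name `likelihood_term` is made up for this illustration, and the sketch only covers the upper half of the scale; the terms for low probabilities are left out.

```python
# Lower probability bounds for the upper half of the IPCC likelihood scale.
# Assumption: values as I understand them from the IPCC guidance, and
# treated here as strict lower bounds. Verify against the reports.
LIKELIHOOD_SCALE = [
    (0.99, "Virtually certain"),
    (0.95, "Extremely likely"),
    (0.90, "Very likely"),
    (0.66, "Likely"),
    (0.50, "More likely than not"),
]


def likelihood_term(probability):
    """Return the strongest term whose threshold the probability exceeds.

    Hypothetical helper for illustration. Returns None when the
    probability is 0.5 or less, since this sketch omits the terms
    for low probabilities.
    """
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    # The scale is sorted from the highest threshold down, so the first
    # match is the strongest statement that can be made.
    for threshold, term in LIKELIHOOD_SCALE:
        if probability > threshold:
            return term
    return None


if __name__ == "__main__":
    for p in (0.995, 0.97, 0.7, 0.6, 0.3):
        print(p, "->", likelihood_term(p))
```

The point of such a table is that every qualifier in the text corresponds to a stated probability range, so a reader can tell exactly how strong a claim is meant to be.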